The remote data extraction functionality automatically extracts data from another system and loads it onto the local system. Using this technique, live data can be extracted and transferred automatically to the development or test system, ready to be used for testing.

The data is first extracted into a temporary library prefixed with @RDB, which is then converted into a save file and transferred to the local system, either via FTP or manually. If the target library does not already exist on the local system, the save file is restored to a library of the same name as the target library and the process is complete. If the target library does exist, the save file is restored to a library prefixed with @RST. If the Data Option is *REPLACE, the files in the target library that are being extracted are cleared. Then, for both *ADD and *REPLACE extractions, the data is copied from the @RST library to the target library.
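The restore logic on the local system can be read as a small decision procedure. The following Python sketch is illustrative only: the function name `plan_restore`, the wording of each step, and the generic "@RST library" label are assumptions made for this example and are not part of TestBench or Extractor. It models the order of the steps, not the product's actual implementation.

```python
def plan_restore(target_library, existing_libraries, data_option):
    """Return the ordered local-system steps once the save file has arrived.

    Hypothetical helper for illustration; it only models the decision flow.
    """
    if data_option not in ("*ADD", "*REPLACE"):
        raise ValueError(f"unsupported Data Option: {data_option}")

    # Target library absent: restore straight into it and finish.
    if target_library not in existing_libraries:
        return [f"restore save file to {target_library}"]

    # Target library present: restore into an @RST-prefixed staging library.
    steps = ["restore save file to @RST library"]
    if data_option == "*REPLACE":
        # Only the files being extracted are cleared, not the whole library.
        steps.append(f"clear extracted files in {target_library}")
    # Both *ADD and *REPLACE finish by copying from the staging library.
    steps.append(f"copy data from @RST library to {target_library}")
    return steps
```

Calling `plan_restore("TESTLIB", {"TESTLIB"}, "*REPLACE")` yields three steps (restore, clear, copy), whereas the same call with an empty set of existing libraries yields a single restore step.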

Remote extraction can only be used if you are running a licensed version of TestBench or Extractor on the local system.

Pre-Requisites

The required prerequisites are as follows:-

• One of the Original Software products which provide extraction functionality, such as TestBench or Extractor, is present on the remote system. This must be the same version as the product running on the local system.

• There is an IP connection to the remote system.

• A Relational Database Directory Entry has been created for the remote system using the command ADDRDBDIRE. Existing entries can be viewed using DSPRDBDIRE.

• Verify that the Distributed Data Management (DDM) server job is started on both the local and remote systems. Key in the command WRKACTJOB and locate the server job called QRWTLSTN in subsystem QSYSWRK. If this server job cannot be found, key in the following command to start it:-

STRTCPSVR SERVER(*DDM)

• The following test has been completed, which ensures that a connection can be made to the remote system:-

Key in STRSQL

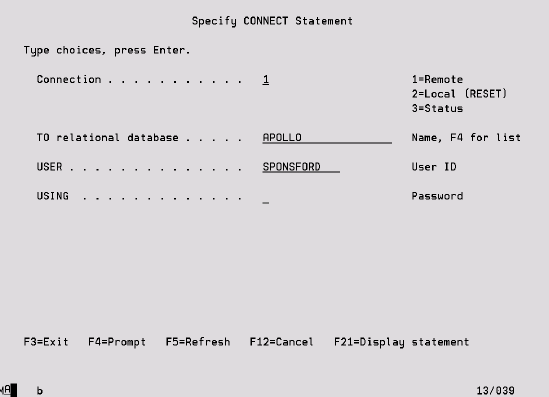

Key in CONNECT, press F4 and fill in the following screen, then press Enter.

You should receive the message ‘Current connection is to relational database XXX’.

Key in DISCONNECT XXX

Key in SET CONNECTION YYY where YYY is the local system
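Before working through the STRSQL test, it can be useful to confirm from a workstation that the remote DDM server is reachable over IP at all. The following Python sketch is an assumption-laden convenience, not part of TestBench: the function name `can_reach` is hypothetical, and the default port of 446 assumes the remote system uses the standard DRDA/DDM TCP port, which may have been changed or blocked by a firewall on your network.

```python
import socket

# Assumption: the remote DDM/DRDA server listens on the standard port 446.
DRDA_DEFAULT_PORT = 446


def can_reach(host, port=DRDA_DEFAULT_PORT, timeout=5.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the host and attempts a TCP connect.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False
```

For example, `can_reach("remotesys.example.com")` (a placeholder host name) returning False suggests checking the IP connection and the QRWTLSTN server job before attempting CONNECT. A True result only shows that the port is open; the STRSQL test above is still needed to confirm that the Relational Database Directory Entry and credentials work.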

Definition

To specify that the data is to be extracted from a remote system, key in the name of the system in the ‘Remote Database’ field on the Data Case description screen. This is the name of the Relational Database Directory Entry as set up using the ADDRDBDIRE command. F4 can be used to select from a list of valid databases.

Also define whether the data should be transferred automatically to the local system using FTP or whether a manual media transfer will be required. In the latter case, once the data has been extracted on the remote system, the Data Run adopts a status of ‘MedReady’. The name of the library containing the data can be found by keying option 5 (details) against the Run. Once this library has been manually restored to the local system, option 13 against the Data Run resumes the extract process and moves the data to the target library. There are some additional features which assist in the automation of the media transfer process:

• A message can be sent to a designated message queue when the data extraction on the remote machine is complete and the library containing the extracted data is ready to be transferred. This is controlled by the system settings which can be found in the Data Case Options area of System Values. See the System chapter for more information.

• Two APIs enable a list of Data Case Runs to be accessed and details of an individual run to be retrieved. Thus a controlling program could be created to monitor for the message stating that there is a run at ‘MedReady’ status and then retrieve the details of the run so that the library can be transferred to the local system. The APIs are called API014R and API015R; please refer to Appendix D for more information.

• The command RESUMEEXT provides a command interface into the resume extract function which is otherwise accessed from Work With Data Case Runs.
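The controlling program described above could follow a simple loop: list the runs, pick out those at ‘MedReady’ status, look up the library for each, transfer it, and resume the extract. The Python sketch below shows only that control flow. Every callable passed in (`list_runs`, `get_run_library`, `transfer_library`, `resume_extract`) is a hypothetical stand-in supplied by the caller; the real parameters of API014R, API015R and RESUMEEXT are documented in Appendix D and are not reproduced or assumed here.

```python
def process_ready_runs(list_runs, get_run_library, transfer_library, resume_extract):
    """Transfer and resume every Data Case Run that is at 'MedReady' status.

    All four arguments are caller-supplied callables (hypothetical wrappers):
      list_runs()            -> iterable of (run_id, status) pairs
      get_run_library(id)    -> name of the library holding the extracted data
      transfer_library(name) -> moves that library to the local system
      resume_extract(id)     -> resumes the extract for the run
    Returns the list of run ids that were handled.
    """
    handled = []
    for run_id, status in list_runs():
        if status != "MedReady":
            continue  # run is not waiting for a media transfer
        library = get_run_library(run_id)
        transfer_library(library)  # must finish before the extract is resumed
        resume_extract(run_id)
        handled.append(run_id)
    return handled
```

Passing the operations in as callables keeps the loop independent of how the APIs are actually invoked, so the same logic can be exercised with simple stubs before it is wired to the real interfaces.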

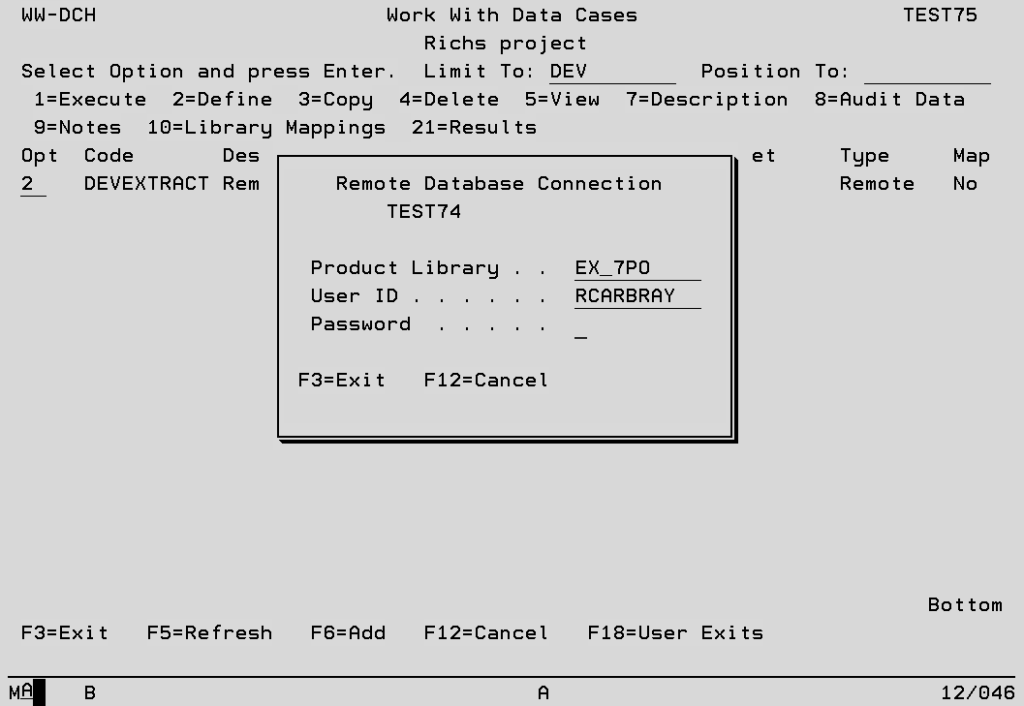

When a remote Data Case is maintained or executed, a connection has to be made to the remote system. The default option is to use the User ID for the current session to connect to the remote system, in which case the following screen will be displayed and either the correct password or an alternative User ID and password must be keyed.

Product Library Specify the name of the library on the remote system which contains the extraction functionality. e.g. TB_7PO for TestBench, EX_7PO for Extractor.

The default value is the name of the TestBench or Extractor library on the local system but can be changed in System Setup…Data Case System Options…Remote System Definition.


User ID This will be used for the connection to the remote system and defaults to the User ID for the current session.

Password The valid password for the User ID which will be used to make the remote connection.

The standard length is 10 characters. If your IBM system value is set to accommodate longer passwords, FTP passwords of up to 128 characters are supported as of TestBench 8.2. The TestBench screens for password entry change according to the password level specified in the IBM system value QPWDLVL.

The alternative is to set up a standard User Profile to be used for all remote database connections, causing the above screen to be bypassed. See the Data Case Options section of the separate System chapter.

Execution Settings

The run priority and time slice that will be applied to the remote extract job can be defined in System Values; see the separate System chapter. The original settings are re-applied after the job completes.

Limitations

Due to the more complex methodology which must be used for remote data extraction, there are some limitations which are not present when extracting data on the local system.

• The F14 Sample Data function is not available.

• Alternative File Descriptions can be used, but there must be a copy of the file present on the local system during AFD definition.

• All remote Data Cases must be extraction only; no update or archive functionality can be used.